What is the learning unit about?

In our enthusiasm for innovation, it is easy to forget that new technologies can also cause harm. As captured in the old Latin adage primum non nocere (“first, do no harm”), moving fast is not inherently virtuous if it comes at the cost of unintended consequences. Even well-intentioned actions can end up causing more harm than the problem they aim to solve.

The Responsible Use of Digital Tools learning unit invites students to engage with this tension directly. It encourages them to recognise and reflect on the modern forms of harm associated with digital technologies in healthcare. These include violations of privacy, the exacerbation of existing health inequities, the erosion of patient autonomy, and even the gradual loss of human relationships in care. Alongside developing the ability to discuss these ethical dimensions, students are introduced to the legal frameworks that exist at the European level – such as the General Data Protection Regulation (GDPR), the Medical Device Regulation (MDR), and the EU AI Act – which aim to safeguard patients in an increasingly digital healthcare landscape.

Importantly, the learning unit is deliberately practice-oriented. Rather than focusing on abstract or overly technical legal provisions, it introduces concrete tools such as the FUTURE-AI framework. Checklists of this kind help students focus on the practical questions they, as future healthcare professionals, should be asking developers and technology providers before integrating new tools into clinical practice.

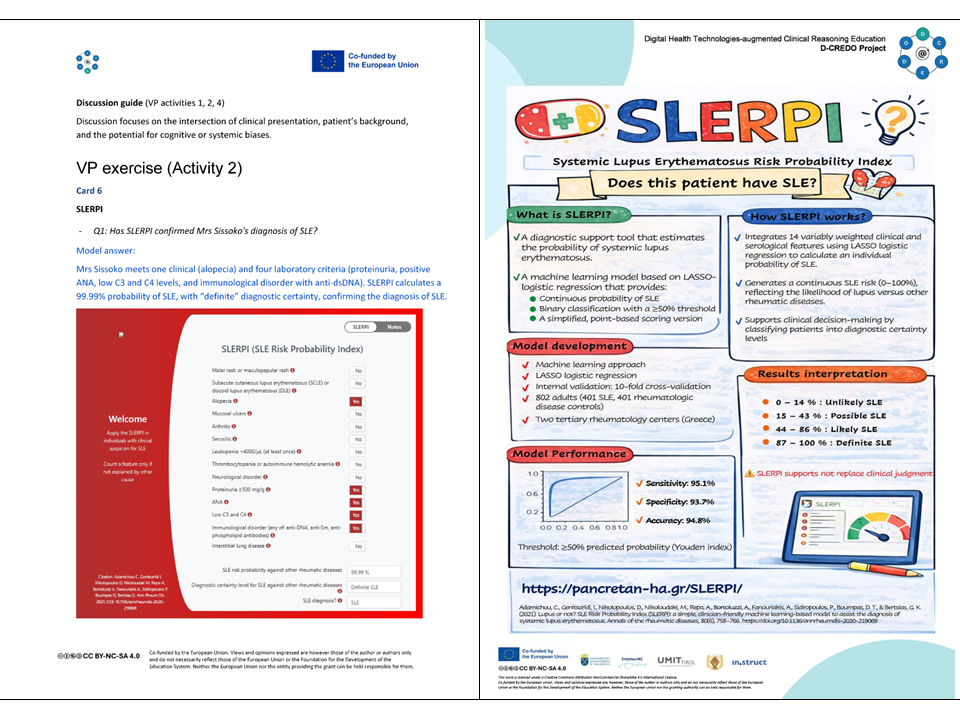

To ground these ideas, the unit is built around the clinical case of Hélène Sissoko, a 34-year-old night bar singer who is ultimately diagnosed with systemic lupus erythematosus (SLE). SLE itself presents a clinical reasoning challenge: its symptoms develop gradually and overlap with many other conditions, and there is no single definitive test. This complexity is further compounded by well-documented systemic disparities, as Black women are often disadvantaged in both the diagnosis and management of the disease.

As learners work through Hélène’s case, they are encouraged to identify potential sources of bias, particularly in digital tools used for diagnosis and prognosis. These tools, often built on large datasets and machine learning models, raise important questions about fairness and representation. Because these discussions can quickly become highly technical, students also practise communicating these issues clearly and transparently with patients – an essential step in maintaining trust and ensuring informed consent.

After completing this learning unit, students should be able to critically appraise digital health tools, identify potential sources of bias and inequity, and communicate the associated risks clearly and transparently to patients. These skills are essential for ensuring that the integration of digital technologies into clinical practice remains safe, equitable, and patient-centred.

How did we develop it?

We know that students value authenticity in their learning experiences. With this in mind, we set out to make the ethical and legal dilemmas in this unit feel as realistic as possible. To do so, we explored real-world examples by reviewing popular press coverage and consulting the AI Incident Database (https://incidentdatabase.ai). This allowed us to identify situations where digital tools in healthcare caused harm rather than benefit – and, crucially, to understand why.

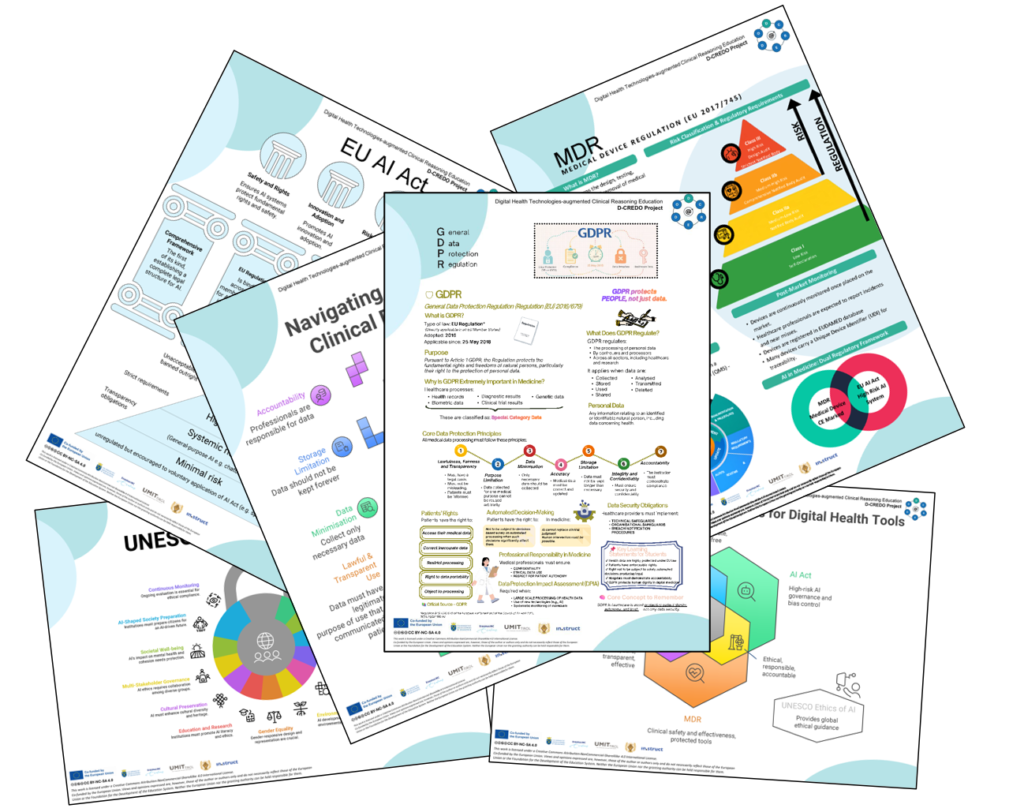

Another key consideration was accessibility. Legal texts are often dense and difficult to engage with, which can discourage students from fully interacting with them. To address this, we adopted a strategy familiar to many learners: the use of “cheat sheets.” We designed infographic-style summaries of key European legal frameworks, enabling students to quickly grasp essential concepts without becoming overwhelmed by technical detail.

Finally, we aimed to ensure that all digital tools integrated into the virtual patient experience were freely accessible. Through an extensive literature review on the use of AI in SLE, we identified publicly available machine learning models, such as SLERPI, as well as open datasets published alongside academic articles. These resources were incorporated into the learning unit as practical, hands-on examples, allowing students to move beyond theory and engage directly with real tools.

What did we learn?

A common perception is that regulation – particularly in the context of AI – stifles innovation and represents unnecessary bureaucracy. While the long-term impact of these frameworks is still unfolding, our exploration of the AI Incident Database suggests a more nuanced picture. There are numerous documented cases in which new technologies have caused harm when implemented without sufficient oversight. From this perspective, regulation does not simply constrain innovation. It plays a critical role in ensuring that innovation is safe, equitable, and trustworthy.

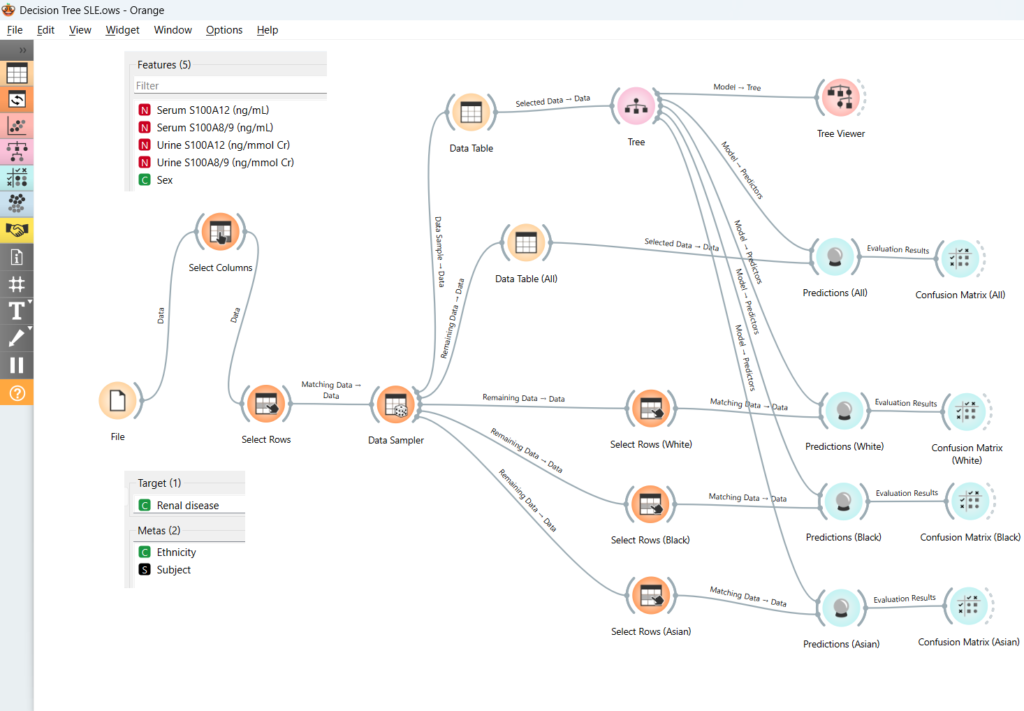

The process of developing this learning unit also reinforced an important point: it is not enough to evaluate a digital health tool based solely on personal experience. A tool that works well for one group of patients may still disadvantage others. While we explored this through the example of Black patients with SLE, similar concerns extend to many other populations, including older adults, individuals with disabilities, people with low digital literacy, non-native language speakers, and those living in rural or underserved areas.
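To make this point concrete, here is a minimal Python sketch of a subgroup check. It is not part of the learning unit's materials: the function name `sensitivity_by_group`, the group labels "A" and "B", and all the records are invented for illustration and do not describe any real tool or patient data. It only shows how a reassuring overall figure can hide a gap between groups.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Return {group: sensitivity} for (group, has_disease, flagged) records.

    Sensitivity is the share of patients who have the disease
    and were correctly flagged by the tool.
    """
    flagged_cases = defaultdict(int)
    total_cases = defaultdict(int)
    for group, has_disease, flagged in records:
        if has_disease:
            total_cases[group] += 1
            if flagged:
                flagged_cases[group] += 1
    return {g: flagged_cases[g] / total_cases[g] for g in total_cases}


# Invented toy records (hypothetical, NOT real patient data):
# each tuple is (group, has_disease, flagged_by_tool).
toy_records = (
    [("A", True, True)] * 9 + [("A", True, False)] * 1
    + [("B", True, True)] * 6 + [("B", True, False)] * 4
)

rates = sensitivity_by_group(toy_records)

# Overall sensitivity, ignoring group membership.
cases = [flagged for _, has_disease, flagged in toy_records if has_disease]
overall = sum(cases) / len(cases)

# Largest difference in sensitivity between any two groups.
gap = max(rates.values()) - min(rates.values())

print(f"overall sensitivity: {overall:.2f}")
for group, rate in sorted(rates.items()):
    print(f"group {group}: {rate:.2f}")
print(f"gap between groups: {gap:.2f}")
```

In this toy setting the tool looks acceptable when all patients are pooled, yet it misses far more cases in one group than in the other. That is the kind of question worth putting to a developer: how does the tool perform for each patient group, not only on average?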

Finally, we were reminded that, despite common assumptions, machine learning tools are not necessarily inaccessible. Our experience with the visual data mining tool Orange Data Mining was particularly encouraging. Developed by the University of Ljubljana, it is freely available across multiple operating systems and provides a user-friendly entry point for conducting fairness analyses of AI systems. This reinforces the idea that engaging critically with digital tools is not only necessary, but increasingly feasible for students and practitioners alike.

Stay connected with D-CREDO and follow our journey on LinkedIn for more updates, insights, and stories.